Beyond basic functional modeling: adding planning and control processes

The more comprehensively software application delivery systems are modeled, the more value can be extracted from their processes. A comprehensive model may ultimately be fed into a simulator, where experimenting with parameters can reveal hidden optimizations. Today's topic therefore extends our earlier work on modeling: going beyond basic functional modeling of a system to add functional modeling for planning and control.

We recently covered how to create a functional model and how to align functional models with process improvement. In those first forays into functional modeling, we created a model that encompassed the execution phase of software activity.

Triple Diagonal Modeling

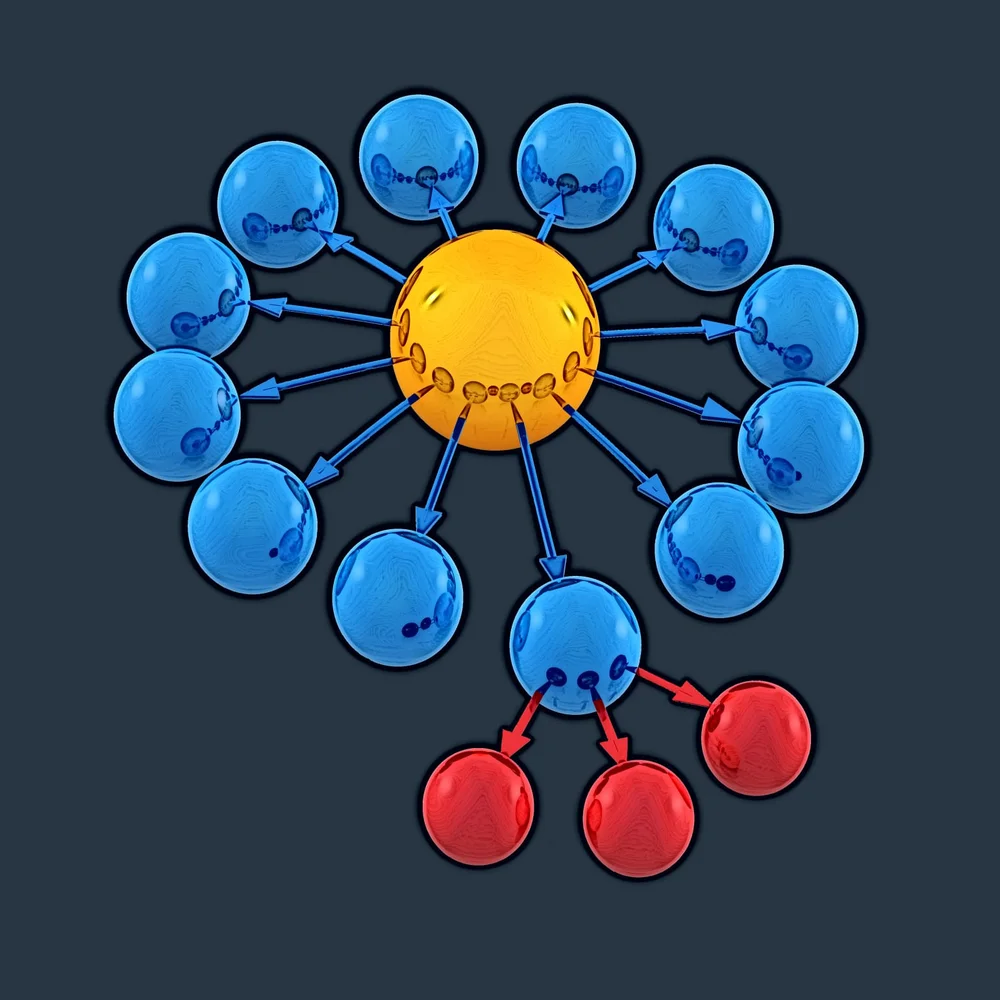

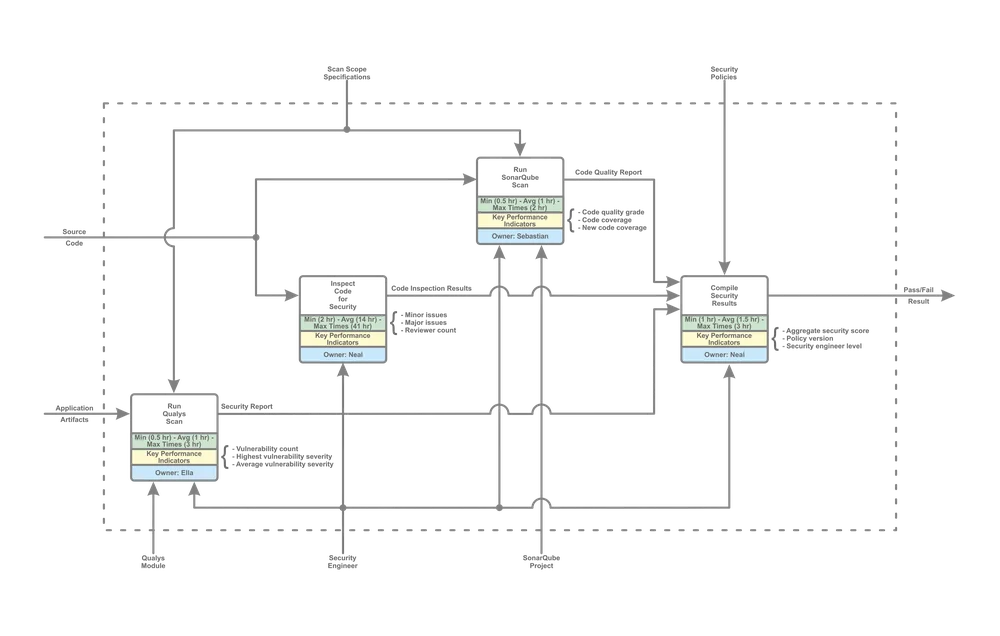

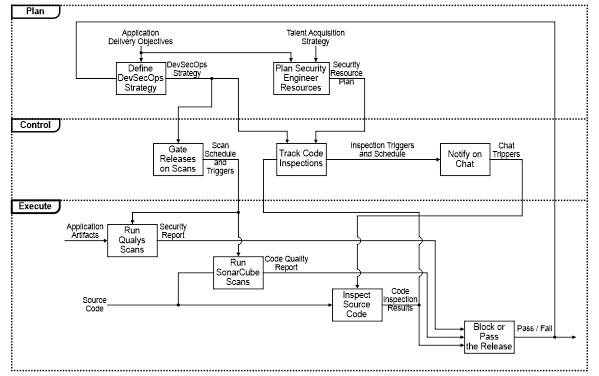

Consider the following basic (execution) functional model for a DevSecOps process:

Triple Diagonal modeling, a known technique for improving productivity in business processes, can also be applied to software application delivery systems. It stems from the ICAM (Integrated Computer-Aided Manufacturing) Definition Language (IDEF0), which originated with the U.S. Air Force in the mid-1970s and is often used in Department of Defense projects.

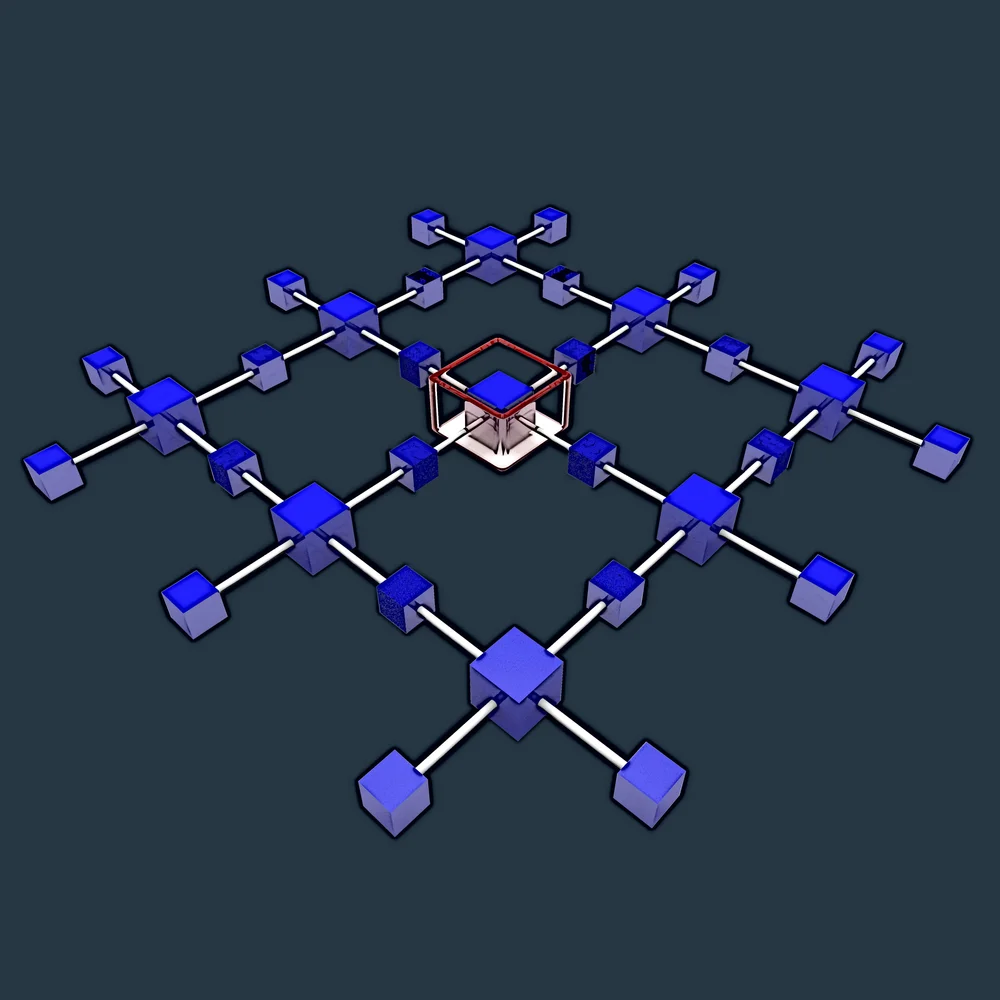

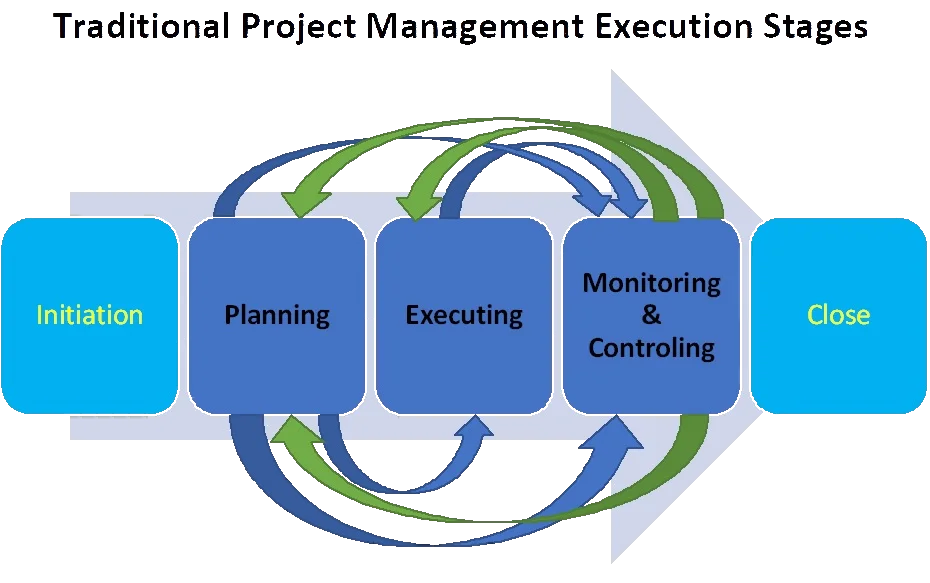

This modeling encompasses three distinct stages associated with the traditional approach to project management: execution, control, and planning (see Figure 2).

In software delivery systems, we generally build our functional models “from the bottom up”: first the execution level, then the control and planning structures above it. Since we already have the execution level, we can add layers to this model by introducing control and planning functions. At this point, we start with a basic model of the flows rather than a detailed functional model.
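To make the bottom-up ordering concrete, here is a minimal Python sketch of a layered model. It assumes a hypothetical `FunctionalModel` class in which a control or planning function may only be added once the level directly beneath it has at least one function. The function names (build, security scan, deploy, release gate, release planning) are illustrative placeholders, not part of IDEF0 or of the Triple Diagonal notation.

```python
from __future__ import annotations

from enum import IntEnum


class Level(IntEnum):
    """The three Triple Diagonal levels, ordered bottom-up."""
    EXECUTION = 0
    CONTROL = 1
    PLANNING = 2


class FunctionalModel:
    """Hypothetical layered model: function name -> level."""

    def __init__(self) -> None:
        self.functions: dict[str, Level] = {}

    def add_function(self, name: str, level: Level) -> None:
        # Bottom-up rule (an assumption for this sketch): a level may only be
        # populated once the level directly beneath it has at least one function.
        if level > Level.EXECUTION:
            below = Level(level - 1)
            if below not in self.functions.values():
                raise ValueError(f"add {below.name} functions before {level.name}")
        self.functions[name] = level

    def layer(self, level: Level) -> list[str]:
        """Names of all functions at one level, in insertion order."""
        return [name for name, lv in self.functions.items() if lv == level]


# Start from the existing execution model, then layer control and planning on top.
model = FunctionalModel()
for step in ("build", "security scan", "deploy"):
    model.add_function(step, Level.EXECUTION)
model.add_function("release gate", Level.CONTROL)
model.add_function("release planning", Level.PLANNING)
print(model.layer(Level.CONTROL))  # ['release gate']
```

Rejecting out-of-order additions is only one possible design choice; a modeling tool could just as reasonably warn and continue.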

Consider the information, product, and cash flows at the lowest levels of execution (e.g., security scans or artifact builds). Triple Diagonal modeling follows an eight-step process (Levine & Villareal, 1993, pp. 2-3):

- Determine the Basic Flow of the Product’s Process: Identify the scope and boundaries;

- Determine the Material Flow: Identify the basic inputs and outputs of each execution-level function;

- Add Control Feed-forward: Identify control functions and note the outputs from control functions;

- Add Control Feedback: Identify information outputs from the execution level that serve as inputs to control functions;

- Add Planning Feed-forward: Identify planning functions and note the outputs from one planning function that are used as inputs to another planning function;

- Add Planning Feedback: Identify outputs of planning functions that can serve as control feedback to other planning functions;

- Quantify the Material Flow: Identify important quantitative measures of resource consumption and performance; and

- Quantify the Information Flow: Quantify the resources consumed in each control and planning function, determine cycle times for information processing and decision-making, and evaluate the quality (accuracy) of the information flows.
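Steps 7 and 8 are where the model becomes quantitative. The sketch below is one hedged way to record those measures in Python; the `FunctionMeasure` and `InformationFlow` records, their field names, the sample figures, and the 0.95 accuracy threshold are all hypothetical assumptions chosen for illustration, not values from Levine and Villareal.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class FunctionMeasure:
    """Hypothetical per-function measures for steps 7 and 8."""
    name: str
    level: str                # "execution", "control" or "planning"
    effort_h_per_week: float  # resources consumed by the function
    cycle_time_h: float       # processing or decision-making time


@dataclass
class InformationFlow:
    """A hypothetical information flow between two functions."""
    source: str
    target: str
    accuracy: float  # fraction of records that are correct, 0.0 to 1.0


def effort_by_level(measures: list[FunctionMeasure]) -> dict[str, float]:
    """Total resource consumption per level."""
    totals: dict[str, float] = {}
    for m in measures:
        totals[m.level] = totals.get(m.level, 0.0) + m.effort_h_per_week
    return totals


def loop_cycle_time(measures: list[FunctionMeasure], path: list[str]) -> float:
    """Sum of cycle times along a loop of named functions."""
    by_name = {m.name: m for m in measures}
    return sum(by_name[name].cycle_time_h for name in path)


def inaccurate_flows(flows: list[InformationFlow], threshold: float = 0.95) -> list[str]:
    """Information flows whose accuracy falls below the chosen threshold."""
    return [f"{f.source} -> {f.target}" for f in flows if f.accuracy < threshold]


measures = [
    FunctionMeasure("security scan", "execution", 20.0, 2.0),
    FunctionMeasure("deploy", "execution", 6.0, 0.5),
    FunctionMeasure("release gate", "control", 4.0, 8.0),
    FunctionMeasure("release planning", "planning", 3.0, 40.0),
]
flows = [
    InformationFlow("security scan", "release gate", 0.90),  # control feedback
    InformationFlow("release gate", "release planning", 0.98),
]
print(effort_by_level(measures))  # {'execution': 26.0, 'control': 4.0, 'planning': 3.0}
print(loop_cycle_time(measures, ["security scan", "release gate", "deploy"]))  # 10.5
print(inaccurate_flows(flows))  # ['security scan -> release gate']
```

Here the loop from scan results, through the release-gate decision, to deployment stands in for the decision-making cycle time of step 8; a real model would choose its own loops and measures.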

Plan, Control, Execute

From this basic model, we can then develop the functional models for the control and planning operations. Together, these functional models form a framework for value delivery that spans everything from strategy and planning through execution. To bring these models to life, modelers should include metrics such as headcount, time, and quality.
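As a rough illustration of how headcount, time, and quality metrics can be combined, the following Python sketch estimates each stage's weekly capacity, picks the bottleneck, and computes the rolled throughput yield (the product of first-pass yields). The stage figures and the 40-hour week are hypothetical, and the capacity formula assumes staff work only on their own stage with no rework.

```python
from math import prod

# Hypothetical stage metrics: (headcount, hours of work per item, first-pass yield).
STAGES = {
    "build": (2, 0.5, 0.97),
    "security scan": (1, 2.0, 0.90),
    "deploy": (1, 0.5, 0.99),
}


def weekly_capacity(stages: dict, hours_per_week: float = 40.0) -> dict:
    """Items per week each stage could finish, assuming dedicated staff and no rework."""
    return {
        name: headcount * hours_per_week / hours_per_item
        for name, (headcount, hours_per_item, _fpy) in stages.items()
    }


def bottleneck(stages: dict, hours_per_week: float = 40.0) -> str:
    """The stage with the lowest weekly capacity."""
    capacity = weekly_capacity(stages, hours_per_week)
    return min(capacity, key=capacity.get)


def rolled_throughput_yield(stages: dict) -> float:
    """Chance an item passes every stage right the first time (stages assumed independent)."""
    return prod(fpy for _headcount, _hours, fpy in stages.values())


print(weekly_capacity(STAGES))  # {'build': 160.0, 'security scan': 20.0, 'deploy': 80.0}
print(bottleneck(STAGES))  # security scan
print(round(rolled_throughput_yield(STAGES), 4))  # 0.8643
```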

The Next Step: Digital Twins

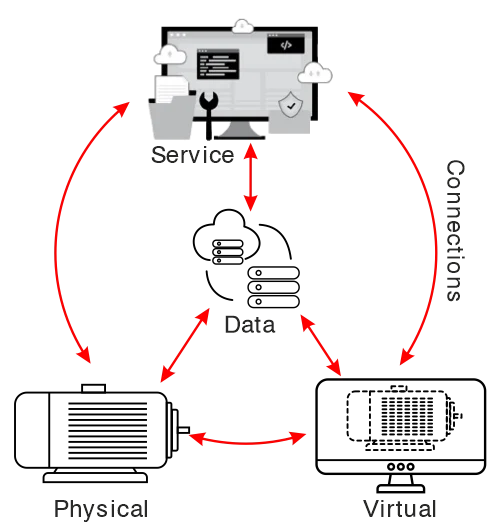

With the right data in place, these models can also be simulated. The models and simulations will evolve with the organization and can serve as a source of discovery, analysis, and design; in effect, they become living models and tools for growth. This evolution is currently being expressed through the digital twin, an emerging concept that maps reality to a digital model and simulation, most notably in product design.
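A minimal sketch of such a simulation in Python follows, assuming Poisson arrivals, triangular service-time distributions, and first-come, first-served queues at each stage. It is a toy model rather than a full discrete-event simulator, and the stage names, worker counts, and durations are hypothetical. Varying the number of security-scan workers is one example of the parameter experiments a simulator makes cheap.

```python
import random
import statistics


def simulate(stages, n_items=500, mean_interarrival_h=2.0, seed=42):
    """Mean lead time (hours) for work items flowing through a serial pipeline.

    stages: list of (name, workers, low_h, mode_h, high_h); each item's service
    time at a stage is drawn from a triangular distribution (an assumption).
    """
    rng = random.Random(seed)
    # Poisson arrivals: exponentially distributed gaps between new work items.
    arrivals, t = [], 0.0
    for _ in range(n_items):
        t += rng.expovariate(1.0 / mean_interarrival_h)
        arrivals.append(t)

    ready = list(arrivals)  # when each item is ready for the current stage
    for _name, workers, low, mode, high in stages:
        free_at = [0.0] * workers  # when each worker at this stage is next idle
        done = [0.0] * n_items
        # First-come, first-served: items are taken in the order they became
        # ready, and each goes to whichever worker frees up first.
        for i in sorted(range(n_items), key=lambda k: ready[k]):
            w = min(range(workers), key=lambda j: free_at[j])
            start = max(ready[i], free_at[w])
            done[i] = start + rng.triangular(low, high, mode)
            free_at[w] = done[i]
        ready = done
    return statistics.mean(finish - arrive for finish, arrive in zip(ready, arrivals))


def pipeline(scan_workers):
    """Hypothetical DevSecOps stages; only the scan staffing varies."""
    return [
        ("build", 2, 0.2, 0.5, 1.0),
        ("security scan", scan_workers, 1.0, 2.5, 5.0),
        ("deploy", 1, 0.1, 0.3, 0.6),
    ]


# Parameter experiment: how does scan staffing change mean lead time?
for workers in (1, 2, 3):
    print(workers, round(simulate(pipeline(workers)), 1))
```

With these assumed figures, a single scan worker (mean 2.83 hours per item against a new item every 2 hours on average) cannot keep up, so its queue and the mean lead time grow over the run; adding a second worker removes most of that waiting.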

Grieves originated the digital twin concept in his 2003 product lifecycle management course (see Figure 4). Grieves (2014) defined the digital twin as a “virtual representation of what has been produced” (title page). Later, NASA defined the digital twin as “a multiphysics, multiscale, probabilistic, ultrafidelity simulation that reflects, in a timely manner, the state of the corresponding twin based on the historical data, real-time sensor data, and physical model” (Fei et al., 2018, p. 8).

The digital twin is a simulation with the following characteristics (Falekas & Karlis, 2021, pp. 4-5):

- Multiphysics, meaning the cooperation of different descriptions of the system, such as aerodynamics, fluid dynamics, electromagnetics, mechanical stresses, etc.;

- Multiscale, meaning the simulation adapts in real time to the required level of detail, so users can zoom in to a component of a component or out to a complete view of the digital twin;

- Probabilistic, meaning it is based on models derived from state-of-the-art analyses of each building block, so that it can predict future behavior and follow the same description protocol as the real twin;

- Ultrafidelity, meaning it offers unlimited precision down to the lowest possible level. In practice, this is a compromise, usually traded off against computational power and time.
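The multiscale characteristic can be sketched with a simple component tree that reports a rolled-up metric at whatever depth the user chooses. This is a hypothetical Python illustration, not part of Falekas and Karlis's description; the `Component` class, the component names, and the effort hours are invented placeholders.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Component:
    """A node in a hypothetical twin of the delivery pipeline."""
    name: str
    effort_h: float = 0.0  # the node's own effort; parent nodes usually leave this at 0
    children: list[Component] = field(default_factory=list)

    def total(self) -> float:
        """Effort rolled up over this component and all of its sub-components."""
        return self.effort_h + sum(child.total() for child in self.children)

    def view(self, depth: int, indent: int = 0) -> list[str]:
        """Show the tree down to `depth` levels; anything deeper stays aggregated."""
        lines = [f"{'  ' * indent}{self.name}: {self.total():.1f} h"]
        if depth > 0:
            for child in self.children:
                lines.extend(child.view(depth - 1, indent + 1))
        return lines


twin = Component("delivery pipeline", children=[
    Component("build", 3.0),
    Component("security scan", children=[
        Component("SAST", 1.5),
        Component("dependency audit", 0.5),
    ]),
    Component("deploy", 1.0),
])
print("\n".join(twin.view(depth=0)))  # delivery pipeline: 6.0 h
print("\n".join(twin.view(depth=2)))  # full tree, down to the scan's sub-steps
```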

Future blog entries will occasionally refer back to digital twins.

References:

Falekas, G., & Karlis, A. (2021). Digital twin in electrical machine control and predictive maintenance: State-of-the-art and future prospects. Energies, 14(18), 5933, 1-26.

Fei, T., Jiangfeng, C., Qinglin, Q., Zhang, M., Zhang, H., & Fangyuan, S. (2018). Digital twin-driven product design, manufacturing and service with big data. International Journal of Advanced Manufacturing Technology, 94(9-12), 3563-3576.

Grieves, M. (2014). Digital twin: manufacturing excellence through virtual factory replication. White paper. Florida Institute of Technology, 1-7.

Levine, L. O., & Villareal, L. D. (1993). Triple Diagonal modeling: A mechanism to focus productivity improvement for business success (No. PNL-SA-21413; CONF-9309168-2). Pacific Northwest Lab.